automation

Terraform and GitHub Actions for V Rising hosting on AWS

It’s been a while since I last played V Rising, but I think this is a good project for getting hands-on with a CI/CD pipeline using Terraform and GitHub Actions (an upgraded version of my AWS V Rising hosting solution).

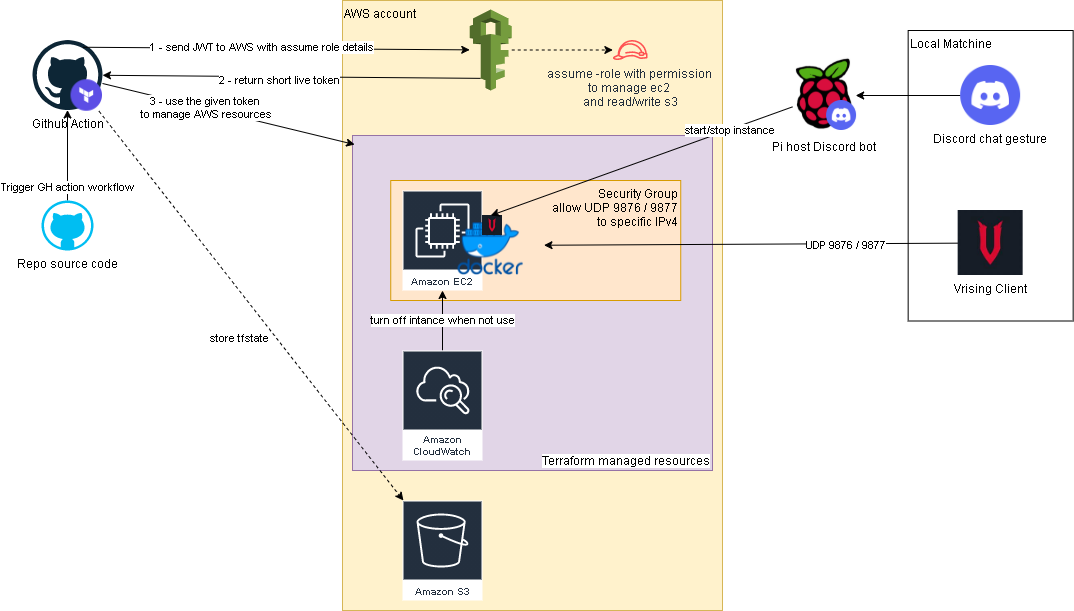

There are a few changes from the original solution. The first is the use of a V Rising Docker image (thanks to TrueOsiris) instead of manually installing the V Rising server on the EC2 instance. The Docker container is started as part of the EC2 user data. Here’s the user data script.

The second change is the Terraform configuration, which turns all the manual setup steps into IaC. Note that the EC2 instance resource uses a ‘home_cdir_block’ variable, which takes its value from a GitHub Actions secret, so only the IPs in ‘home_cdir_block’ can connect to our server. Another layer of protection is the server password in the user data script, which also gets its value from a GitHub secret.
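To make the allow-list idea concrete, here is a small Python sketch of what restricting access to a CIDR block means. It is not part of the repo; the function name and the addresses (documentation ranges) are made up for illustration.

```python
import ipaddress


def is_allowed(client_ip: str, home_cidr_block: str) -> bool:
    """Return True if client_ip falls inside the allowed CIDR block."""
    # strict=True rejects blocks with host bits set, e.g. "203.0.113.5/24".
    network = ipaddress.ip_network(home_cidr_block, strict=True)
    return ipaddress.ip_address(client_ip) in network


if __name__ == "__main__":
    # 203.0.113.0/24 is a documentation range standing in for a home network.
    print(is_allowed("203.0.113.25", "203.0.113.0/24"))
    print(is_allowed("198.51.100.7", "203.0.113.0/24"))
```

A check like this can also be handy for validating the secret's value before handing it to Terraform, since a malformed CIDR only fails at plan/apply time otherwise.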

The Terraform resources are then deployed by GitHub Actions, with OIDC configured to assume a role in AWS. The configuration process can be found here. The IAM role I set up for this project has ‘AmazonEC2FullAccess’ attached, plus the inline policy below:

{
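Separately from the permissions above, the role also needs a trust policy that lets GitHub's OIDC provider assume it. The sketch below builds such a trust policy in Python. It is a generic example of the usual GitHub OIDC trust shape, not a copy of the role used in this project; the function name and the account ID are placeholders.

```python
import json


def github_oidc_trust_policy(account_id: str, repo: str) -> dict:
    """Build a trust policy letting GitHub Actions runs from `repo` assume the role."""
    provider = "token.actions.githubusercontent.com"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "Federated": f"arn:aws:iam::{account_id}:oidc-provider/{provider}"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    # Audience used by the official AWS credentials action.
                    "StringEquals": {f"{provider}:aud": "sts.amazonaws.com"},
                    # Limit the role to workflows from one repository.
                    "StringLike": {f"{provider}:sub": f"repo:{repo}:*"},
                },
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder account ID; the repo name is the one linked in this post.
    policy = github_oidc_trust_policy("123456789012", "tduong10101/Vrising-aws")
    print(json.dumps(policy, indent=2))
```

Scoping the `sub` condition to a single repository is the important part: without it, any repository on GitHub could request the role.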

Oh, I forgot to mention: we also need an S3 bucket created to store the tfstate file, as stated in _provider.tf.

Below is an overview of the upgraded solution.

Github repo: https://github.com/tduong10101/Vrising-aws

Forza log streaming to OpenSearch

In this project I attempted to display Forza logs in ‘real time’ on AWS OpenSearch (a poor man’s Splunk). Below is a quick overview of the log flow and access configuration.

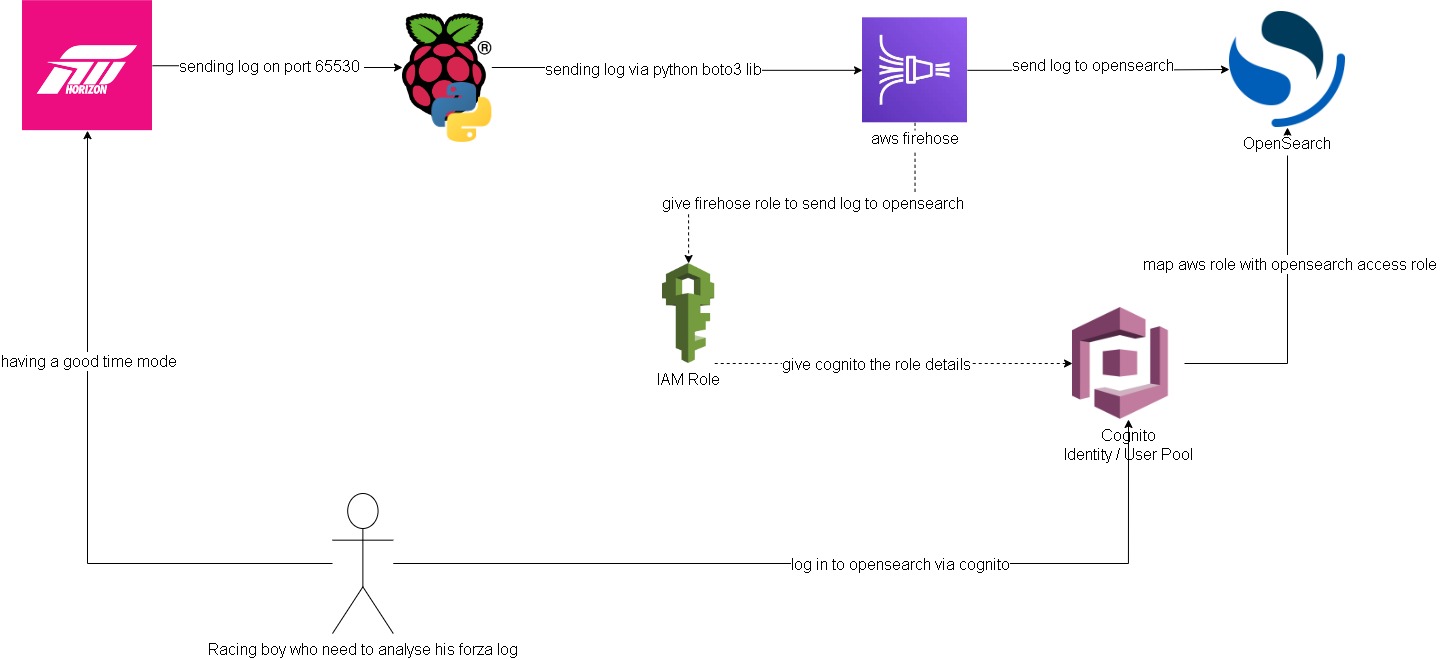

Forza -> Pi -> Firehose data stream:

Setting up log streaming from Forza to a Raspberry Pi is quite straightforward. I forked jasperan’s forza-horizon-5-telemetry-listener repo and added a delay feature and a function that sends the log to an AWS Firehose data stream (forked repo). Then I just ran the Python script while playing Forza.
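As a rough idea of what the delay and the send function could look like, here is a minimal sketch. It is not the code from the forked repo: the class and function names are hypothetical, it assumes the target is a Firehose delivery stream reached through boto3's `put_record`, and it implements the delay as simple throttling (records arriving too soon are dropped).

```python
import json
import time


class ThrottledSender:
    """Forward at most one record per `min_interval` seconds, dropping the rest."""

    def __init__(self, send, min_interval: float, clock=time.monotonic):
        self._send = send
        self._min_interval = min_interval
        self._clock = clock
        self._last_sent = None

    def offer(self, record: dict) -> bool:
        """Send the record if enough time has passed; return True if it was sent."""
        now = self._clock()
        if self._last_sent is not None and now - self._last_sent < self._min_interval:
            return False
        # json.dumps emits a single-line JSON object by default.
        self._send(json.dumps(record).encode("utf-8"))
        self._last_sent = now
        return True


def make_firehose_send(stream_name: str):
    """Return a send(bytes) function backed by boto3 (imported lazily)."""
    import boto3  # third-party; needs AWS credentials available on the Pi

    client = boto3.client("firehose")

    def send(data: bytes) -> None:
        client.put_record(DeliveryStreamName=stream_name, Record={"Data": data})

    return send
```

Usage would be along the lines of `sender = ThrottledSender(make_firehose_send("forza-telemetry"), min_interval=1.0)`, where the stream name is a placeholder, followed by `sender.offer(packet_dict)` for each decoded telemetry packet. Forza emits packets many times per second, so some throttling keeps both the Firehose bill and a small OpenSearch instance manageable.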

Opensearch/Cognito configuration:

OK, this is the hard part, and it’s where I spent most of my time. Firstly, to have Firehose stream data into OpenSearch I need to somehow map the AWS access roles to OpenSearch roles. The ‘Fine grain access’ option will not work; we can either use SAML to hook up to an IdP or use AWS’s in-house Cognito. Since I don’t have an existing IdP, I had to set up a Cognito identity pool and user pool. From there I could give the OpenSearch admin role to the Cognito authenticated role, which is assigned to a user pool group.
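For reference, the role mapping itself can also be expressed as a call to the OpenSearch Security plugin's REST API rather than clicked through in Dashboards. The sketch below only builds the request; it does not send it. The helper name is hypothetical, and which IAM role ARNs to map is an assumption based on the description above.

```python
import json


def role_mapping_request(role: str, iam_role_arns: list[str]) -> tuple[str, str, str]:
    """Return (method, path, body) for mapping IAM role ARNs to an OpenSearch role."""
    path = f"/_plugins/_security/api/rolesmapping/{role}"
    # IAM role ARNs are mapped as "backend roles"; duplicates are removed.
    body = json.dumps({"backend_roles": sorted(set(iam_role_arns))})
    return "PUT", path, body


if __name__ == "__main__":
    # Placeholder ARNs for the Cognito authenticated role and the Firehose role.
    method, path, body = role_mapping_request(
        "all_access",
        [
            "arn:aws:iam::123456789012:role/cognito-authenticated-role",
            "arn:aws:iam::123456789012:role/firehose-delivery-role",
        ],
    )
    print(method, path)
    print(body)
```

Note that a PUT on a role mapping replaces the existing mapping for that role, so the body should list every backend role that still needs access.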

Below are some screenshots of the OpenSearch cluster and Cognito. Thanks also to Soumil Shah; his video on setting up Cognito with OpenSearch helped a lot. Here’s the link.

opensearch configure

opensearch security configure

opensearch role

Note: all of this can be bypassed if I send the log straight to OpenSearch over HTTPS REST. But then it would be too easy ;)

Note 2: I’ve gone with t3.small.search, which is why I put ‘real time’ in quotation marks.

Firehose data stream -> firehose delivery stream -> Opensearch:

Setting up the delivery stream is not too hard. There’s a template for sending logs to OpenSearch. Just remember to give it the right access role.

Here is what it looks like on opensearch:

I’m too tired to build a dashboard for it. Also, the timestamp from the log didn’t get converted to the ‘date’ type, so I’ll need to look into that another time.
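One possible fix for the timestamp issue, untested against this setup, is to send the timestamp as an ISO 8601 string and to create the index with an explicit `date` mapping. The sketch below assumes the log carries epoch seconds in a field called `timestamp`; both the field name and the helper name are assumptions.

```python
from datetime import datetime, timezone


def with_iso_timestamp(record: dict, field: str = "timestamp") -> dict:
    """Return a copy of record with an epoch-seconds field rewritten as ISO 8601 UTC."""
    out = dict(record)
    moment = datetime.fromtimestamp(float(record[field]), tz=timezone.utc)
    out[field] = moment.isoformat(timespec="milliseconds")
    return out


# Index body to use when creating the index, so the field is typed as a date
# up front instead of relying on dynamic mapping.
INDEX_BODY = {"mappings": {"properties": {"timestamp": {"type": "date"}}}}
```

The mapping has to exist before the first document is indexed; an existing index with the field already mapped as text or a number would need to be recreated or reindexed.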

Improvements:

- Docker setup to run the Python listener/log stream script

- maybe stream logs straight to OpenSearch for real-time logs? I feel insecure sending a username/password with the payload though.

- do this but with Splunk? I’m sure the indexing performance would be much better. There’s already an add-on for Forza on Splunk, but it’s not available on Splunk Cloud. The add-on is where I got the idea for this project.

Spotify - Time machine

“Music is the closest thing we have to a time machine”

Want to go back in time and listen to the popular songs of that time? With a bit of web scraping, it is possible to search for the top songs on any given date in the last 20 years and create a Spotify playlist of them.

Where does the idea come from?

This is one of the projects in the 100 days of python challenge - here is the link.

How does it work?

This script scrapes the Billboard top 100 songs for the user’s input date. It then creates a Spotify playlist with the following title format: “hot-100-

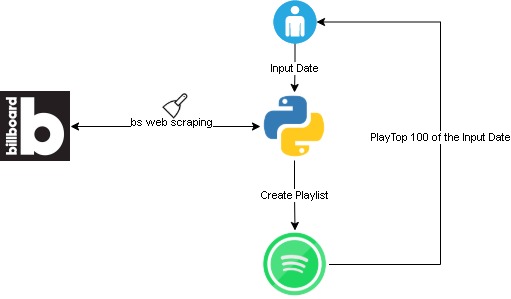
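The first step, turning the user's date into a chart page to scrape, could look roughly like this. It is a sketch rather than the script from the repo: the function name is hypothetical and the URL pattern is an assumption about how Billboard addresses dated Hot 100 charts.

```python
from datetime import date

# Assumed URL pattern for a dated Hot 100 chart page.
BILLBOARD_HOT_100 = "https://www.billboard.com/charts/hot-100"


def chart_url(user_input: str) -> str:
    """Validate a YYYY-MM-DD string and return the chart URL for that date."""
    chart_date = date.fromisoformat(user_input)  # raises ValueError on bad input
    if chart_date > date.today():
        raise ValueError("chart date cannot be in the future")
    return f"{BILLBOARD_HOT_100}/{chart_date.isoformat()}/"


if __name__ == "__main__":
    print(chart_url("2005-07-04"))
```

Validating the date before making any request gives the user a clear error straight away, instead of a confusing scrape of whatever page the site returns for a bad URL.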

To set it up please follow “getting started” in this git repo. Here is an overview flow:

Challenges

The Spotify API authentication flow is complex and hard to get my head around. I ended up using the spotipy Python module instead of sending requests directly to the Spotify API.

It took me a bit of time to figure out the Billboard site structure. Overall not too bad; I cheated a bit and checked the solution. However, the code is my own version.

Duplicate playlists! Not sure if this is a bug, but playlists created by the script do not show up in user_playlists(). So if a date is entered again, a duplicate playlist gets created.
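A possible workaround, sketched below and not verified against this script, is to page through the current user's playlists with spotipy's `current_user_playlists()` and reuse a playlist whose name already matches. The helper names are hypothetical. One guess at the cause is that private playlists are only listed when the token includes the `playlist-read-private` scope, but that is not confirmed here.

```python
def find_playlist_id(sp, name: str):
    """Return the ID of the current user's playlist called `name`, or None."""
    page = sp.current_user_playlists(limit=50)
    while page:
        for playlist in page["items"]:
            if playlist and playlist["name"] == name:
                return playlist["id"]
        # sp.next() follows the paging object's "next" link to the next page.
        page = sp.next(page) if page.get("next") else None
    return None


def get_or_create_playlist(sp, user_id: str, name: str) -> str:
    """Reuse an existing playlist with this name, otherwise create one."""
    existing = find_playlist_id(sp, name)
    if existing:
        return existing
    return sp.user_playlist_create(user_id, name, public=False)["id"]
```

Here `sp` is an authenticated `spotipy.Spotify` client. Matching on the name works because the script generates the title deterministically from the date, so the same date always maps to the same playlist name.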

Get AWS IAM credentials report script

A quick PowerShell script to generate the AWS IAM credentials report and save it in CSV format to a local location.

Import-Module AWSPowerShell
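For anyone who prefers Python, the same generate-then-download flow can be sketched with boto3. This is not a translation of the PowerShell script above, just an illustration of the two IAM calls involved; the function name, polling values and output path are arbitrary choices.

```python
import time


def save_credential_report(iam, path: str, poll_seconds: float = 2.0, max_polls: int = 30) -> str:
    """Generate the IAM credential report and write its CSV content to path."""
    for _ in range(max_polls):
        # Calling generate_credential_report again just reports the current state.
        if iam.generate_credential_report()["State"] == "COMPLETE":
            break
        time.sleep(poll_seconds)
    else:
        raise TimeoutError("credential report was not ready in time")
    content = iam.get_credential_report()["Content"]  # CSV as bytes
    with open(path, "wb") as fh:
        fh.write(content)
    return path
```

With boto3 installed and credentials configured, it would be called as `save_credential_report(boto3.client("iam"), "credential-report.csv")`.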