
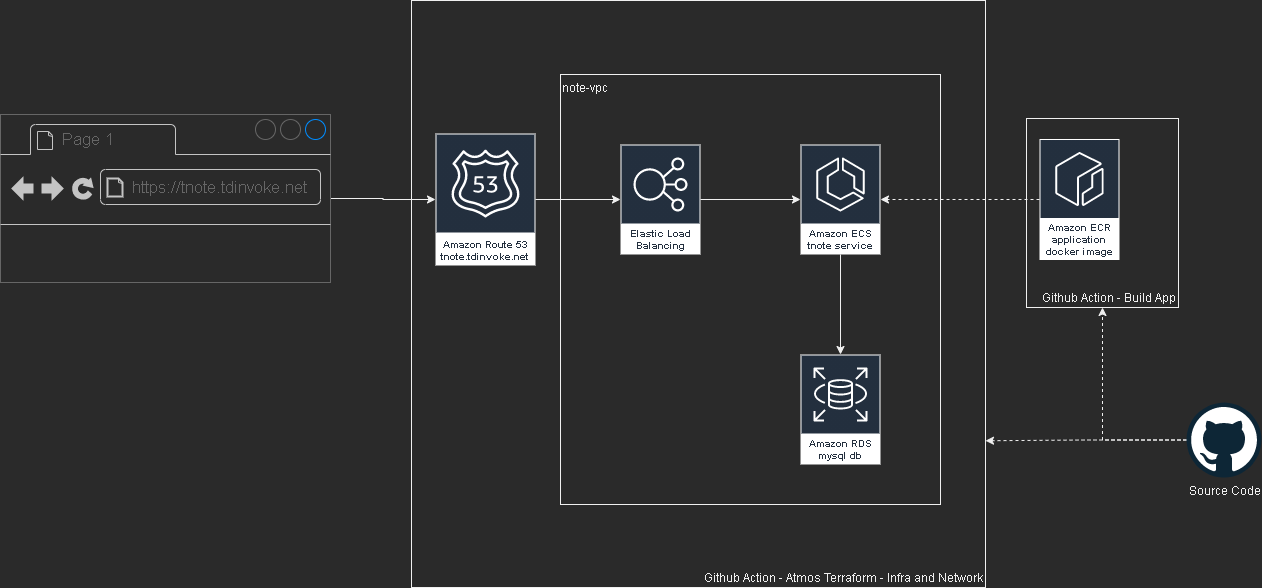

Flask Note app with AWS, Terraform and GitHub Actions

This project is part of a mentoring program at my current workplace, Vanguard Au. Thanks to Indika for the guidance and support throughout this project.

Please test out the app here: https://tnote.tdinvoke.net

Flask note app

Source: https://github.com/tduong10101/tnote/tree/env/tnote-dev-apse2/website

A simple Flask note application that lets users sign up, sign in, and create or delete notes. Thanks to Tech With Tim for the tutorial.

Changes from the tutorial

Moved the database out to a MySQL instance.

Set up .env variables:

    from dotenv import load_dotenv

Connection string:

    url=f"mysql+pymysql://{SQL_USERNAME}:{SQL_PASSWORD}@{SQL_HOST}:{SQL_PORT}/{DB_NAME}"
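As a minimal sketch of how the connection string could be assembled from environment variables: the helper name `build_db_url` and the default port are assumptions, not part of the tnote source. In the real app `load_dotenv()` would populate `os.environ` from the .env file first; the sketch only reads the variables. It also percent-encodes the password, which matters if the password contains characters such as `@` or `/`.

```python
import os
from urllib.parse import quote_plus


def build_db_url(env=None):
    """Assemble a SQLAlchemy URL for PyMySQL from SQL_* variables.

    `env` is any mapping; it defaults to os.environ (hypothetical helper).
    """
    env = os.environ if env is None else env
    user = env["SQL_USERNAME"]
    # Percent-encode the password so '@' or '/' cannot break the URL.
    password = quote_plus(env["SQL_PASSWORD"])
    host = env["SQL_HOST"]
    # Assumption: fall back to MySQL's default port when SQL_PORT is unset.
    port = env.get("SQL_PORT", "3306")
    db_name = env["DB_NAME"]
    return f"mysql+pymysql://{user}:{password}@{host}:{port}/{db_name}"


if __name__ == "__main__":
    example = {
        "SQL_USERNAME": "tnote",
        "SQL_PASSWORD": "p@ss/word",
        "SQL_HOST": "db.example.internal",
        "DB_NAME": "notes",
    }
    print(build_db_url(example))
```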

Update the create_db function as below:

    def create_db(url,app):

Updated the password hashing method to use ‘scrypt’:

    new_user=User(email=email,first_name=first_name,password=generate_password_hash(password1, method='scrypt'))
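To illustrate what a salted scrypt hash involves, here is a standard-library sketch using `hashlib.scrypt`. It is not what Werkzeug does internally: the cost parameters, the salt length and the helper names `hash_password` and `check_password` are all assumptions chosen for the example.

```python
import hashlib
import hmac
import os

# Illustrative cost parameters (assumption, not Werkzeug's defaults).
N, R, P = 2**14, 8, 1


def hash_password(password, salt=None):
    """Return (salt, digest) for the given password using scrypt."""
    salt = salt or os.urandom(16)
    digest = hashlib.scrypt(
        password.encode("utf-8"), salt=salt, n=N, r=R, p=P, dklen=64
    )
    return salt, digest


def check_password(password, salt, expected):
    """Re-hash with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)


if __name__ == "__main__":
    salt, digest = hash_password("correct horse")
    print(check_password("correct horse", salt, digest))
```

In the app itself, `generate_password_hash` and `check_password_hash` from Werkzeug take care of the salt and parameters, so none of this needs to be written by hand.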

Added a Gunicorn server:

    /home/python/.local/bin/gunicorn -w 2 -b 0.0.0.0:80 "website:create_app()"
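The same settings could also live in a Gunicorn config file, which is plain Python. This is only a sketch of an alternative, not how the tnote repo is set up; the file name `gunicorn.conf.py` is Gunicorn's default lookup name in the working directory, and the `wsgi_app` setting needs Gunicorn 20.1 or newer.

```python
# gunicorn.conf.py (sketch; mirrors the command-line flags above)

# Equivalent of -b 0.0.0.0:80
bind = "0.0.0.0:80"

# Equivalent of -w 2
workers = 2

# Equivalent of the positional "website:create_app()" argument
# (requires Gunicorn 20.1+).
wsgi_app = "website:create_app()"
```

With this file in place, running `gunicorn` without arguments from the same directory should pick up the settings.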

Github Workflow configuration

Source: https://github.com/tduong10101/tnote/tree/env/tnote-dev-apse2/.github/workflows

GitHub - AWS OIDC configuration

Follow this doc to configure OIDC so that GitHub Actions can access AWS resources.

app

Uses aws-actions/amazon-ecr-login, coupled with AWS OIDC, to log in to the Docker registry.

    - name: Configure AWS credentials

This action can only be triggered manually.

network

Source: https://github.com/tduong10101/tnote/blob/env/tnote-dev-apse2/.github/workflows/network.yml

This action covers AWS network resource management for the app. It can be triggered manually, or by the push and PR flows.

Here are the trigger details:

| Action | Trigger |
|---|---|
| Atmos Terraform Plan | Manual, PR create |
| Atmos Terraform Apply | Manual, PR merge (Push) |
| Atmos Terraform Destroy | Manual |

Automatic triggers only apply to branches matching “env/*”.
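To make the branch filter concrete, here is a small Python sketch of how the `env/*` pattern behaves. It is an approximation written for illustration (the function name is hypothetical); the real matching is done by GitHub Actions, where `*` matches any characters except `/`.

```python
import re


def matches_env_branch(branch):
    """Approximate GitHub's `env/*` branch filter.

    In GitHub's filter syntax `*` does not cross a `/`, so
    `env/a/b` is not matched by `env/*`.
    """
    return re.fullmatch(r"env/[^/]*", branch) is not None


if __name__ == "__main__":
    for name in ("env/tnote-dev-apse2", "main", "env/a/b"):
        print(name, matches_env_branch(name))
```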

infra

Source: https://github.com/tduong10101/tnote/blob/env/tnote-dev-apse2/.github/workflows/infra.yml

This action creates the AWS ECS resources, the DNS record and the RDS MySQL database.

| Action | Trigger |
|---|---|
| Atmos Terraform Plan | Manual, PR create |
| Atmos Terraform Apply | Manual |
| Atmos Terraform Destroy | Manual |

Terraform - Atmos

Atmos solves the missing parameter management piece across multiple stacks for Terraform.

name_pattern is set to {tenant}-{stage}-{environment}, for example: tnote-dev-apse2
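As a quick illustration of how the three tokens combine into a stack name, here is a sketch that assumes the middle token of the example (dev) is the Atmos stage and the last one (apse2) is the environment. The helper names are hypothetical; Atmos does this rendering itself.

```python
# Assumption: tenant / stage / environment are the three tokens,
# in that order, joined with hyphens.
NAME_PATTERN = "{tenant}-{stage}-{environment}"


def stack_name(tenant, stage, environment):
    """Render a stack name from its three context values."""
    return NAME_PATTERN.format(
        tenant=tenant, stage=stage, environment=environment
    )


def parse_stack_name(name):
    """Split a stack name back into its context values.

    Only valid when tenant and stage contain no hyphens.
    """
    tenant, stage, environment = name.split("-", 2)
    return {"tenant": tenant, "stage": stage, "environment": environment}


if __name__ == "__main__":
    print(stack_name("tnote", "dev", "apse2"))
    print(parse_stack_name("tnote-dev-apse2"))
```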

Source: https://github.com/tduong10101/tnote/tree/env/tnote-dev-apse2/atmos-tf

Structure:

    .

Issues encountered

Avoid a service start deadlock when starting the ECS service from UserData

Symptom: the ECS service stays in ‘inactive’ status, and the start command hangs when run manually on the EC2 instance.

Fix:

    sudo systemctl enable --now --no-block ecs.service

Ensure RDS and ECS are in the same VPC

Remember to turn on ECS logging by adding the CloudWatch log group resource.

Error:

    pymysql.err.OperationalError: (2003, "Can't connect to MySQL server on 'terraform-20231118092121881600000001.czfqdh70wguv.ap-southeast-2.rds.amazonaws.com' (timed out)")

Don’t declare db_name in the RDS resource block

The note app has its own function to create the database and tables. If db_name is declared in Terraform, RDS creates an empty database without the required tables, and the app then fails to run.

Load secrets into Atmos Terraform using GitHub secrets and TF_VAR

Ensure sensitive is set to true for the secret variable. Use a GitHub secret and a TF_VAR_ environment variable to load the secret into Atmos Terraform: TF_VAR_secret_name=${{ secrets.secret_name }}
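Terraform reads any environment variable named `TF_VAR_<name>` as the value of the input variable `<name>`. The sketch below shows that mapping in Python for a local run; the helper name `terraform_env` is hypothetical, and in the workflow itself GitHub Actions sets the variable directly.

```python
import os


def terraform_env(secrets, base_env=None):
    """Return a copy of the environment with TF_VAR_<name> entries added.

    `secrets` maps Terraform variable names to their values.
    """
    env = dict(os.environ if base_env is None else base_env)
    for name, value in secrets.items():
        env[f"TF_VAR_{name}"] = value
    return env


if __name__ == "__main__":
    env = terraform_env({"secret_name": "example-value"}, base_env={})
    print(sorted(env))
    # The resulting mapping could then be passed to a subprocess, e.g.
    # subprocess.run(["atmos", "terraform", "plan", "<component>",
    #                 "-s", "tnote-dev-apse2"], env=env)
    # where <component> is a placeholder for the Atmos component name.
```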

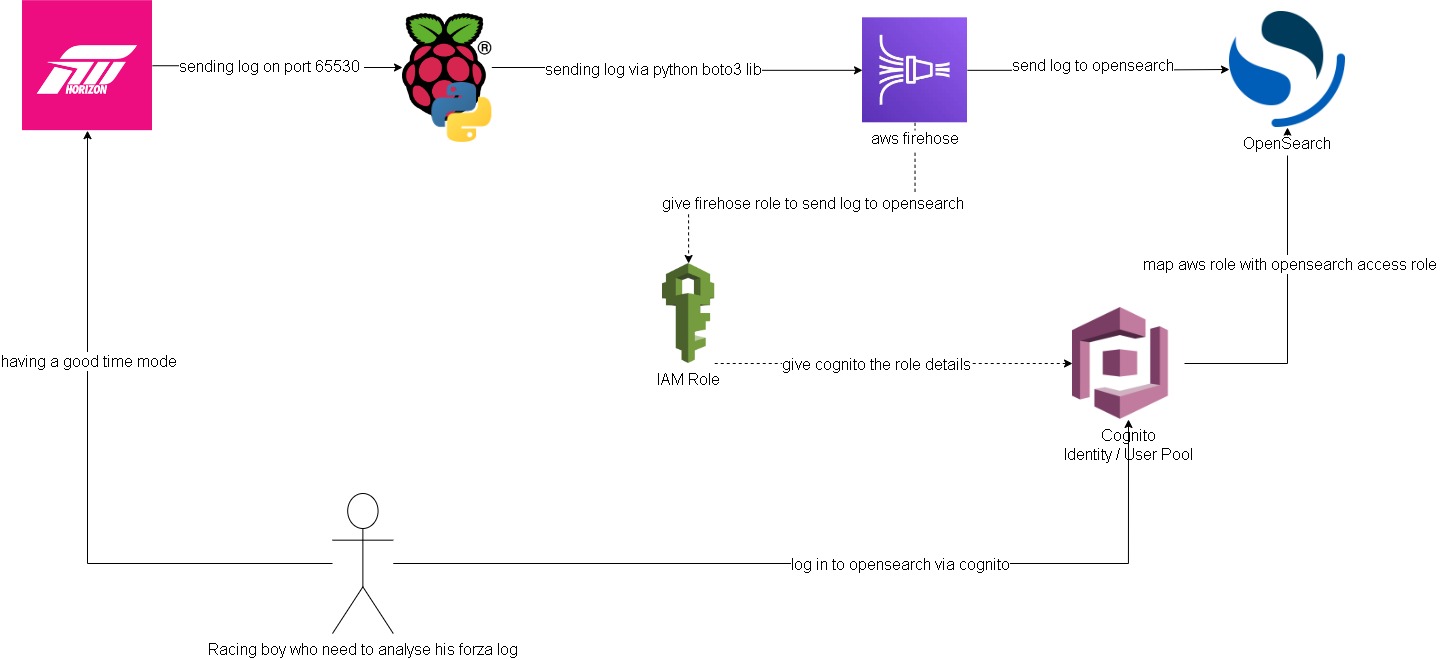

Forza log streaming to OpenSearch

In this project I attempted to get Forza logs displayed in ‘real time’ on AWS OpenSearch (a poor man’s Splunk). Below is a quick overview of the log flow and access configurations.

Forza -> Pi -> Firehose data stream:

Setting up log streaming from Forza to a Raspberry Pi is quite straightforward. I forked jasperan's forza-horizon-5-telemetry-listener repo and updated it with a delay functionality and a function to send the log to an AWS Firehose data stream (forked repo). Then I just ran the Python script while playing Forza.
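The delay and send steps might look roughly like the sketch below. It is not the code from the forked repo: the `Throttle` class, `send_telemetry` and the stream name are hypothetical, and it assumes the target is a Firehose delivery stream reached through boto3's `put_record`. If the first hop is a Kinesis data stream instead, the `kinesis` client's `put_record` takes `StreamName`, `Data` and `PartitionKey`.

```python
import json
import time


class Throttle:
    """Allow at most one send every `interval` seconds (the 'delay')."""

    def __init__(self, interval, clock=time.monotonic):
        self.interval = interval
        self.clock = clock
        self._last = None

    def ready(self):
        now = self.clock()
        if self._last is None or now - self._last >= self.interval:
            self._last = now
            return True
        return False


def send_telemetry(firehose, stream_name, telemetry):
    """Send one telemetry dict as a newline-terminated JSON record."""
    # Firehose expects bytes; the newline keeps records separable downstream.
    data = (json.dumps(telemetry) + "\n").encode("utf-8")
    return firehose.put_record(
        DeliveryStreamName=stream_name, Record={"Data": data}
    )


# Usage sketch (needs boto3 and AWS credentials on the Pi):
#   import boto3
#   firehose = boto3.client("firehose", region_name="ap-southeast-2")
#   throttle = Throttle(interval=1.0)
#   if throttle.ready():
#       send_telemetry(firehose, "forza-telemetry", {"speed": 42})
```

Passing the client in as an argument keeps the function easy to try out with a stub before pointing it at AWS.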

Opensearch/Cognito configuration:

OK, this is the hard part. I spent most of my time on this. Firstly, to have Firehose stream data into OpenSearch I needed to somehow map the AWS access roles to OpenSearch roles. The ‘fine-grained access’ option will not work here; we can either use SAML to hook up to an IdP or use the in-house AWS Cognito. Since I don’t have an existing IdP, I had to set up a Cognito identity pool and user pool. From there I could give the admin OpenSearch role to the Cognito authenticated role, which is assigned to a user pool group.

Below are some screenshots of the OpenSearch cluster and Cognito. Also, thanks to Soumil Shah: his video on setting up Cognito with OpenSearch helped a lot. Here’s the link.

opensearch configure

opensearch security configure

opensearch role

Note: all this can be bypassed if I choose to send the log straight to OpenSearch via HTTPS REST. But then it would be too easy ;)

Note 2: I’ve gone with t3.small.search, which is why I put real-time in quotation marks.

Firehose data stream -> firehose delivery stream -> Opensearch:

Setting up the delivery stream is not too hard. There’s a template for sending logs to OpenSearch. Just remember to give it the right access role.

Here is what it looks like on opensearch:

I’m too tired to build any dashboard for it. Also, the timestamp from the log didn’t get transformed into the ‘date’ type, so I’ll need to look into that another time.

Improvements:

- Docker setup to run the Python listener/log stream script

- Maybe stream the log straight to OpenSearch for real-time logs? I feel insecure sending a username/password with the payload though.

- Do this but with Splunk? I’m sure the indexing performance would be much better. There’s already an add-on for Forza on Splunk, but it’s not available on Splunk Cloud. The add-on is where I got the idea for this project.

Hosting VRising on AWS

Quick Intro - “V Rising is a open-world survival game developed and published by Stunlock Studios that was released on May 17, 2022. Awaken as a vampire. Hunt for blood in nearby settlements to regain your strength and evade the scorching sun to survive. Raise your castle and thrive in an ever-changing open world full of mystery.” (vrising.fandom.com)

Hosting a dedicated server for this game is similar to how we set one up for Valheim (Hosting Valheim on AWS). We used the same server tier as Valheim; the details are below.

The V Rising server is not officially available for Linux. Luckily, we found this guide for setting it up on CentOS, so we used a CentOS AMI from “Community AMIs”.

- AMI: ap-southeast-2 image for x86_64 CentOS_7
- instance-type: t3.medium
- vpc: we're using the default VPC created by AWS on our account.
- storage: 8 GB.
- security group with the following rules:
  - Custom UDP Rule: UDP 9876 open to our PC IPs
  - Custom UDP Rule: UDP 9877 open to our PC IPs
  - ssh: TCP 22 open to our PC IPs

We are using the same cloudwatch alarm as Valheim to turn off the server when there’s no activity.

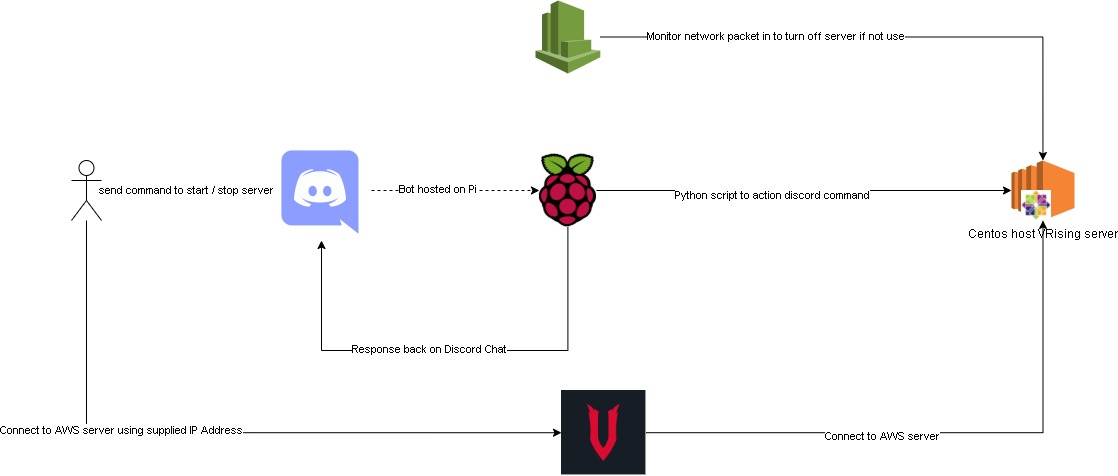

This time we also added a new feature: using Discord bot chat to turn the AWS server on/off.

This Discord bot is hosted on one of our Raspberry Pis. Here is the bot repo and below is the flow chart of the whole setup.
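The chat-to-EC2 step could look roughly like the sketch below. It is not taken from the bot repo: the `!start` and `!stop` commands, the reply texts and the `handle_command` name are made up for illustration. The EC2 calls `start_instances` and `stop_instances` are the boto3 client methods, and the client is passed in so the logic can be tried with a stub.

```python
def handle_command(ec2, instance_id, message):
    """Map a chat message to an EC2 action and return the bot's reply.

    Returns None when the message is not a known command.
    """
    command = message.strip().lower()
    if command == "!start":  # hypothetical command name
        ec2.start_instances(InstanceIds=[instance_id])
        return "Starting the server..."
    if command == "!stop":  # hypothetical command name
        ec2.stop_instances(InstanceIds=[instance_id])
        return "Stopping the server..."
    return None


# Usage sketch (needs boto3 and AWS credentials on the Pi):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="ap-southeast-2")
#   reply = handle_command(ec2, "<instance-id>", "!start")
```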

Improvements:

Maybe the CloudWatch alarm could be paired with a Lambda function that sends a notification to our Discord channel, letting us know that the server got turned off by CloudWatch.

We considered using an Elastic IP so the server could retain its IP after it got turned off. But we decided not to, as we wanted to save some cost.

It has been a while since we got together and worked on something this fun. Hopefully, we’ll find more ideas and interesting projects to work on together. Thanks for reading, so long until next time.

Spotify - Time machine

“Music is the closest thing we have to a time machine”

Want to go back in time and listen to the popular songs of that time? With a bit of web scraping, it is possible to look up the top songs for any given date in the last 20 years and create a Spotify playlist of them.

Where does the idea come from?

This is one of the projects in the 100 days of python challenge - here is the link.

How does it work?

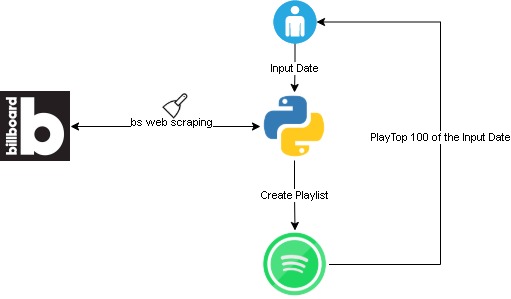

This script scrapes the Billboard top 100 songs for the user’s input date. Then it creates a Spotify playlist with the following title format “hot-100-
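The first step, turning the user's date into a chart page to scrape, might be sketched as follows. The URL layout is an assumption based on Billboard's public Hot 100 chart pages, the `YYYY-MM-DD` input format is also assumed, and `chart_url` is a hypothetical helper rather than a function from the repo.

```python
from datetime import date, datetime

# Assumption: Billboard serves the Hot 100 for a given day at this path.
BILLBOARD_URL = "https://www.billboard.com/charts/hot-100/{day}/"


def chart_url(user_input):
    """Validate a YYYY-MM-DD string and return the chart URL for that day."""
    # strptime raises ValueError for malformed or impossible dates.
    day = datetime.strptime(user_input, "%Y-%m-%d").date()
    if day > date.today():
        raise ValueError("date is in the future")
    return BILLBOARD_URL.format(day=day.isoformat())


if __name__ == "__main__":
    print(chart_url("2005-08-12"))
```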

To set it up please follow “getting started” in this git repo. Here is an overview flow:

Challenges

The Spotify API authentication flow is complex and hard to get my head around. I ended up using the spotipy Python module instead of making requests directly to the Spotify API.

It took me a bit of time to figure out the Billboard site structure. Overall it was not too bad; I cheated a bit and checked the solution, but the code here is my own version.

Duplicate playlists! Not sure if this is a bug, but playlists created by the script do not show up in user_playlists(). So if a date is entered again, a duplicate playlist gets created.
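One thing that might be worth trying for the duplicate check is spotipy's `current_user_playlists()`, which pages through the logged-in user's own playlists. Whether it actually returns the playlists that `user_playlists()` misses is untested here, and it may need the `playlist-read-private` scope if the script creates private playlists. The helper name `find_playlist` is hypothetical.

```python
def find_playlist(sp, name, page_size=50):
    """Return the id of the current user's playlist called `name`, or None.

    `sp` is an authenticated spotipy.Spotify client (or any object with a
    compatible current_user_playlists method).
    """
    offset = 0
    while True:
        page = sp.current_user_playlists(limit=page_size, offset=offset)
        for playlist in page["items"]:
            if playlist["name"] == name:
                return playlist["id"]
        # The paging object has no "next" link on the last page.
        if page.get("next") is None:
            return None
        offset += page_size
```

The script could call this before creating a playlist and reuse the returned id when one already exists.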